RESEARCH.

Adaptive behaviour in a complex, dynamic and multisensory world poses some of the most fundamental computational challenges for the brain, notably inference, probabilistic/statistical computations, decision making, learning, binding and attention. To define the underlying computations and neural mechanisms in typical and atypical populations (e.g. in neuropsychiatric disorders), my lab combines behavioural experiments, computational modelling (Bayesian, neural network) and neuroimaging and brain stimulation (fMRI, MEG, EEG, TMS).

Current research revolves around three research themes:

Multisensory integration across the cortical hierarchy and subcortical circuits

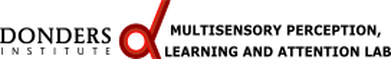

Accumulating evidence shows that multisensory influences are nearly ubiquitous, occurring as early as primary areas in human neocortex as well as in subcortical circuits. This pervasiveness of multisensory interactions requires us to revise current theories of sensory cortical organization. It raises important questions about the computational roles, functional contributions and behavioural relevance of multisensory interactions across the cortical hierarchy and in subcortical circuits. We characterize the spatiotemporal evolution of multisensory interactions across the cortical hierarchy with MEG/EEG and fMRI. Resolving multisensory and attentional influences across cortical depth with submillimeter fMRI at 7T provides insights into the underlying circuitry and input-output computations.

Gau R, Bazin PL, Trampel R, Turner R, Noppeney U (2020) Resolving multisensory and attentional influences across cortical depth in sensory cortices. eLife. 9:e46856. doi: 10.7554/eLife.46856

Solving the binding or causal inference problem and attention control

In busy traffic, our senses are inundated with myriad signals: the noise of a truck passing by at high speed, flashing traffic lights, the smell of fumes and the sight of other pedestrians. When deciding whether to cross the street, we should estimate the truck’s speed by integrating motion information from audition and vision, and yet avoid misperceiving the truck as talking and flashing. Multisensory perception in complex environments thus relies on solving the binding or causal inference problem: determining whether signals come from a common source and should hence be integrated, or come from independent sources and should be treated separately. Bayesian causal inference accounts for this binding problem by explicitly modelling the potential causal structures (here: common vs. independent sources) that could have generated the sensory signals. We have shown that the brain performs causal inference by dynamically encoding multiple perceptual estimates across the cortical hierarchy. Currently, we ask how the brain accomplishes this feat in complex naturalistic scenarios, for instance at a cocktail party with multiple speakers. In the face of the brain’s limited computational resources, we hypothesize that attentional mechanisms are critical to approximate these optimal solutions for progressively more complex scenarios.
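To make the idea of modelling common vs. independent sources concrete, the following is a minimal sketch of Bayesian causal inference for one auditory and one visual signal. It assumes a widely used Gaussian formulation (Gaussian sensory noise, a Gaussian spatial prior, and a model-averaging readout); these choices, the function name `causal_inference` and all parameter names are illustrative assumptions, not a description of the lab's own implementation.

```python
import math


def causal_inference(x_a, x_v, sigma_a, sigma_v, sigma_p, mu_p=0.0, p_common=0.5):
    """Return (posterior of a common source, fused, segregated, averaged estimate).

    x_a, x_v: noisy auditory and visual signals (hypothetical units, e.g. degrees)
    sigma_a, sigma_v: assumed sensory noise standard deviations
    sigma_p, mu_p: assumed Gaussian prior over source location
    p_common: assumed prior probability that both signals share one source
    """
    var_a, var_v, var_p = sigma_a ** 2, sigma_v ** 2, sigma_p ** 2

    # Likelihood of both signals if ONE common source generated them.
    denom_c1 = var_a * var_v + var_a * var_p + var_v * var_p
    quad_c1 = ((x_v - x_a) ** 2 * var_p
               + (x_v - mu_p) ** 2 * var_a
               + (x_a - mu_p) ** 2 * var_v) / denom_c1
    like_c1 = math.exp(-0.5 * quad_c1) / (2 * math.pi * math.sqrt(denom_c1))

    # Likelihood of both signals if TWO independent sources generated them.
    quad_c2 = ((x_a - mu_p) ** 2 / (var_a + var_p)
               + (x_v - mu_p) ** 2 / (var_v + var_p))
    like_c2 = math.exp(-0.5 * quad_c2) / (
        2 * math.pi * math.sqrt((var_a + var_p) * (var_v + var_p)))

    # Posterior probability of the common-source structure (Bayes' rule).
    post_c1 = like_c1 * p_common / (like_c1 * p_common + like_c2 * (1 - p_common))

    # Reliability-weighted estimates under each causal structure.
    fused = (x_a / var_a + x_v / var_v + mu_p / var_p) / (
        1 / var_a + 1 / var_v + 1 / var_p)
    segregated_a = (x_a / var_a + mu_p / var_p) / (1 / var_a + 1 / var_p)

    # Model averaging: weight both auditory estimates by the posterior over structures.
    averaged_a = post_c1 * fused + (1 - post_c1) * segregated_a
    return post_c1, fused, segregated_a, averaged_a


# Nearby signals favour a common source; widely separated signals favour independence.
print(causal_inference(0.0, 1.0, sigma_a=2.0, sigma_v=1.0, sigma_p=10.0))
print(causal_inference(0.0, 20.0, sigma_a=2.0, sigma_v=1.0, sigma_p=10.0))
```

In this sketch, the final auditory estimate moves towards the fused estimate when the signals are close together and falls back to the segregated estimate when they are far apart, which is the qualitative signature of causal inference described above.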

Mihalik A, Noppeney U (2020) Causal inference in multisensory perception. J Neurosci. 40(34):6600-6612

Rohe T, Ehlis AC, Noppeney U (2019) The neural dynamics of hierarchical Bayesian causal inference in multisensory perception. Nat Commun. 10(1):1907.

Aller M, Noppeney U (2019) To integrate or not to integrate: Temporal dynamics of hierarchical Bayesian causal inference. PLoS Biol. 17(4):e3000210.

Adaptive behaviour in a dynamic multisensory environment

Adapting dynamically to changes in the world around us and in the sensorium of the body is a fundamental challenge facing the brain throughout the lifespan. Critically, changes can evolve across multiple timescales. Adapting to rapid contextual changes requires the brain to flexibly adjust its expectations, as formalized by Bayesian priors. Other changes in our sensorium are more subtle and evolve slowly, such as the changes in inter-aural or inter-ocular separation that result from physical growth during neurodevelopment. These changes continuously alter the sensory cues that guide the construction of neural representations, for instance of the space around us. Finally, the brain faces situations in which it needs to learn a novel mapping between sensory cues, such as in neural prostheses, sensory substitution or man-machine systems. We combine computational modelling and neuroimaging to characterize how the brain adapts dynamically to a changing multisensory world across multiple timescales.
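As an illustration of how expectations formalized as Bayesian priors can be adjusted from trial to trial, the sketch below updates a Gaussian prior with each new observation (a scalar Kalman-filter step). It is a simplified example under stated assumptions: the Gaussian form, the `drift_var` parameter that expresses how quickly the world is assumed to change, and the function names are all hypothetical and are not taken from our published models.

```python
def update_gaussian_prior(prior_mean, prior_var, observation, obs_var, drift_var=0.0):
    """One trial of Gaussian prior updating (a scalar Kalman-filter step)."""
    # Allow for the possibility that the world changed since the last trial:
    # drift_var > 0 inflates prior uncertainty and so speeds up adaptation.
    predicted_var = prior_var + drift_var
    # Weight given to the new observation relative to the prior expectation.
    gain = predicted_var / (predicted_var + obs_var)
    posterior_mean = prior_mean + gain * (observation - prior_mean)
    posterior_var = (1.0 - gain) * predicted_var
    return posterior_mean, posterior_var


def track(observations, obs_var=1.0, drift_var=0.0, prior_mean=0.0, prior_var=1.0):
    """Run the update over a sequence and return the expectation after each trial."""
    means = []
    mean, var = prior_mean, prior_var
    for x in observations:
        mean, var = update_gaussian_prior(mean, var, x, obs_var, drift_var)
        means.append(mean)
    return means


# A stable context (20 trials at 0) followed by an abrupt change (5 trials at 10).
observations = [0.0] * 20 + [10.0] * 5
stable_world = track(observations, drift_var=0.0)    # assumes nothing ever changes
volatile_world = track(observations, drift_var=1.0)  # assumes the world can drift
print(stable_world[-1], volatile_world[-1])
```

An observer that assumes a static world averages over the whole history and adapts slowly after the change, whereas an observer that allows for drift keeps its prior uncertain and re-adjusts its expectations within a few trials.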

Beierholm U, Rohe T, Ferrari A, Stegle O, Noppeney U. (2020) Using the past to estimate sensory uncertainty. eLife. e54172. doi: 10.7554/eLife.54172.